In 2023 and 2024, “we can’t get enough GPUs” became the defining complaint of the AI industry, and GPU supply its defining constraint. Companies were spending billions and waiting months for NVIDIA hardware. CEOs were publicly complaining about GPU shortages the way people complained about toilet paper shortages in 2020: with a mix of frustration and dark humor.

But why? What makes these chips so essential for AI? And what is actually different about a GPU compared to the CPU sitting in your laptop? Here’s a clear explanation of the hardware that’s running the AI revolution.

CPUs vs GPUs: The Fundamental Difference

Your laptop or desktop computer has a CPU — Central Processing Unit. CPUs are designed to be incredibly good at running complex, sequential tasks. A modern CPU might have 8 to 32 cores, each capable of running sophisticated logic with lots of branching and decision-making. CPUs are generalists: they run your operating system, your browser, your spreadsheet software, your games, all simultaneously.

A GPU (Graphics Processing Unit) was originally designed for a very different purpose: rendering the millions of pixels on your screen. That task requires doing an enormous number of relatively simple calculations in parallel. To color every pixel in a 1080p image (1920 × 1080 pixels), you need to perform about 2 million independent calculations, ideally at the same time.

So GPUs are built very differently from CPUs. Instead of 8 powerful cores, a modern GPU might have 10,000 smaller, simpler cores. They’re not good at complex sequential logic. But for doing the same operation on massive amounts of data simultaneously? They’re extraordinarily fast.

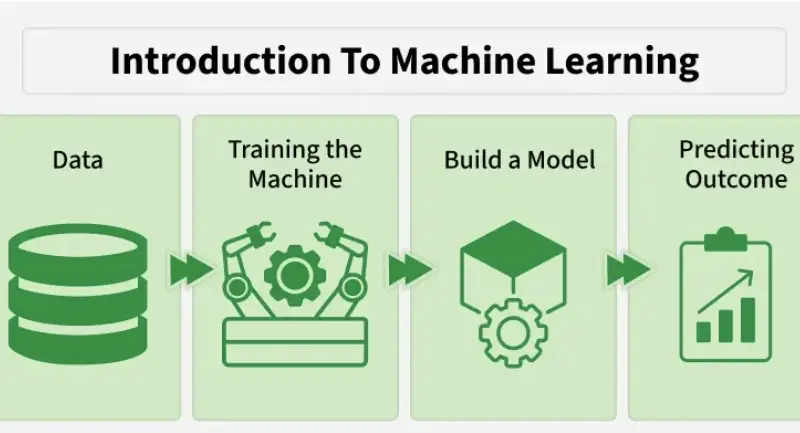

This turns out to be exactly what deep learning needs. Training a neural network is essentially matrix multiplication — multiplying large grids of numbers together, billions of times. Each multiplication is simple. But you need to do an astronomical number of them in parallel. GPUs were designed for exactly this kind of work, which is why they became the hardware of choice for AI years before the current boom.
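To make the "simple but astronomically many" point concrete, here is a minimal sketch in plain Python. The function names and the 4096 × 4096 matrix size are illustrative assumptions, not figures from any particular model. It shows that every output cell of a matrix product is an independent calculation, and how quickly the multiply-add count grows.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Each output cell depends only on one row of `a` and one column of `b`,
    # so all rows * cols cells could in principle be computed at the same time.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


def multiply_add_count(rows, inner, cols):
    """Multiply-add steps in a (rows x inner) times (inner x cols) product."""
    return rows * inner * cols


product = matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]])
print(product)  # [[19, 22], [43, 50]]

# A single product of two hypothetical 4096 x 4096 matrices:
print(multiply_add_count(4096, 4096, 4096))  # 68719476736
```

A CPU works through those cells a handful at a time. A GPU hands them out across thousands of cores, which is the whole trick.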

Why NVIDIA? The CUDA Advantage

NVIDIA isn’t the only company that makes GPUs. AMD and Intel both make GPUs too. But NVIDIA dominates AI training and inference so completely that “GPU” in AI contexts almost always means NVIDIA GPU. The reason is CUDA.

In 2006, NVIDIA introduced CUDA — Compute Unified Device Architecture — a programming platform that lets software developers write programs specifically designed to run on NVIDIA GPUs. Before CUDA, using a GPU for non-graphics computation required complex workarounds. CUDA made it straightforward.

The AI research community built its major frameworks (TensorFlow, PyTorch, JAX) with CUDA as the primary GPU backend. Over more than 15 years, an enormous ecosystem developed: CUDA libraries, tutorials, code examples, trained engineers. Switching to a different hardware platform doesn’t just mean buying different chips; it means rewriting software that took years to develop and losing the institutional knowledge of your engineering team.

This software moat is arguably more valuable than NVIDIA’s hardware advantages. AMD’s MI300X GPU has competitive hardware specs, but convincing the ML ecosystem to migrate away from CUDA is an enormous undertaking. Google has their own TPUs specifically to break this dependency, but even Google’s researchers still use NVIDIA GPUs for much of their work.

The H100: What Makes It Special

NVIDIA’s H100 is the chip that became the center of the AI hardware frenzy. Understanding why requires a bit of architecture context.

The H100 is built on NVIDIA’s Hopper architecture and manufactured on TSMC’s 4nm process. It contains 80 billion transistors on a single die, far more than a typical desktop CPU. But the numbers that matter for AI work are different:

80GB of HBM3 memory with 3.35 TB/s of memory bandwidth. Memory bandwidth is often the real bottleneck in AI work — you can have all the compute in the world, but if you can’t feed data to the cores fast enough, they sit idle. The H100’s HBM3 memory is stacked directly on the same package as the chip, reducing the distance data has to travel.
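A rough back-of-the-envelope sketch shows why bandwidth can matter more than raw compute. It assumes the simplest possible case: generating one token at a time, where every model weight has to be read from memory once per token, and nothing else (caches, batching, overlap) helps or hurts. The 30-billion-parameter model and 2 bytes per parameter are hypothetical inputs; only the 3.35 TB/s figure comes from the text.

```python
H100_BANDWIDTH_BYTES_PER_S = 3.35e12  # 3.35 TB/s, as quoted above


def max_tokens_per_second(num_params, bytes_per_param,
                          bandwidth=H100_BANDWIDTH_BYTES_PER_S):
    """Upper bound on tokens/s if each token requires reading all weights once."""
    model_bytes = num_params * bytes_per_param
    return bandwidth / model_bytes


# Hypothetical 30B-parameter model stored at 2 bytes per parameter (60 GB).
rate = max_tokens_per_second(30e9, 2)
print(f"{rate:.1f} tokens/s at most")  # 55.8 tokens/s at most
```

No amount of extra compute raises that ceiling under these assumptions. Only faster memory, smaller weights, or serving many requests per weight read does.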

Tensor Cores are specialized processing units designed specifically for matrix multiplication — the core operation in deep learning. The H100’s 4th-generation Tensor Cores can perform mixed-precision matrix operations far faster than general compute cores could. For transformer model training, this is where most of the real performance comes from.

The Transformer Engine is a feature introduced with the Hopper generation. Transformers (the architecture behind GPT, Claude, and virtually every modern large language model) require specific computational patterns. The Transformer Engine automatically adjusts numerical precision during training, dropping to lower-precision formats where it is safe to do so, to maximize performance without sacrificing accuracy. For transformer model training, NVIDIA cites speedups of up to 6x compared to the previous A100 generation.

NVLink 4 connects multiple H100s together with 900 GB/s of bidirectional bandwidth. Training large models requires clusters of GPUs working together. The faster they can share data with each other, the less time is wasted waiting for one GPU to send results to another. An H100 cluster with NVLink can behave almost like one massive GPU rather than many separate ones.
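Here is a hedged sketch of why interconnect speed matters. It uses the textbook cost of a ring all-reduce, a common way for GPUs to sum their gradients, in which each GPU sends about 2 × (N − 1) / N times the payload. The 10 GB payload, the 8-GPU group, and the 50 GB/s "slower link" are hypothetical, and treating 900 GB/s bidirectional as 450 GB/s per direction is a simplifying assumption. Latency and overlap with compute are ignored.

```python
def ring_allreduce_seconds(payload_bytes, num_gpus, link_bytes_per_s):
    """Idealized time for a ring all-reduce over links of the given speed."""
    # Each GPU sends (and receives) 2 * (N - 1) / N times the payload.
    traffic = 2 * (num_gpus - 1) / num_gpus * payload_bytes
    return traffic / link_bytes_per_s


NVLINK_PER_DIRECTION = 900e9 / 2  # assumption: half of the bidirectional figure
SLOWER_LINK = 50e9                # hypothetical slower interconnect

fast = ring_allreduce_seconds(10e9, 8, NVLINK_PER_DIRECTION)
slow = ring_allreduce_seconds(10e9, 8, SLOWER_LINK)
print(f"NVLink-class link: {fast * 1000:.1f} ms")  # 38.9 ms
print(f"Slower link:       {slow * 1000:.1f} ms")  # 350.0 ms
```

If that exchange happens on every training step, the gap between the two links is time the GPUs spend waiting instead of computing.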

The Economics: Why Everyone Is Desperate for Them

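A single H100 GPU costs roughly $30,000 to $40,000. Cloud providers charge $2 to $5 per hour to rent one. Training a large language model at the scale of GPT-4 reportedly requires thousands to tens of thousands of data center GPUs running for weeks to months, with compute costs alone in the tens to hundreds of millions of dollars. (GPT-4 itself was reportedly trained on the older A100, before H100s were widely available.)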
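The arithmetic behind those figures is simple enough to sketch. The prices and hourly rates below are the ranges quoted above; the 10,000-GPU, 30-day training run is a hypothetical example, and power, networking, and staffing costs are left out.

```python
def break_even_hours(purchase_price, hourly_rate):
    """Rental hours after which buying would have been cheaper than renting."""
    return purchase_price / hourly_rate


def rental_cost(num_gpus, days, hourly_rate):
    """Cost of renting num_gpus GPUs around the clock for the given days."""
    return num_gpus * days * 24 * hourly_rate


# Best and worst cases for buying, using the ranges quoted above.
print(break_even_hours(30_000, 5))  # 6000.0 hours, about 8 months
print(break_even_hours(40_000, 2))  # 20000.0 hours, about 2.3 years

# Hypothetical run: 10,000 GPUs for 30 days at the low end of rental pricing.
print(rental_cost(10_000, 30, 2))   # 14400000
```

Even at the cheapest quoted rate, one month on a cluster of that size lands in the tens of millions of dollars.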

For companies racing to build competitive AI products, having enough H100s is an existential business constraint. Microsoft secured access through its partnership with OpenAI. Google built its own TPU infrastructure. Meta publicly said in early 2024 that it planned to have about 350,000 H100s by the end of that year. Smaller AI startups have sometimes had to delay product launches simply because they couldn’t get enough compute.

NVIDIA’s overall gross margin climbed above 70% in 2024, driven largely by its data center products. It’s one of the most profitable positions in the history of the semiconductor industry.

The Challengers: AMD, Google, and Custom Silicon

AMD MI300X: AMD’s strongest AI chip to date. The MI300X offers 192GB of HBM3 memory — more than twice the H100’s 80GB — which makes it particularly competitive for inference workloads where fitting large models into memory is the primary constraint. Microsoft Azure has deployed MI300X at scale. The software ecosystem is improving but still trails CUDA.
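A quick sketch shows why the extra memory matters for inference. It counts only the model weights and assumes 2 bytes per parameter (16-bit precision). The 70-billion-parameter model is an illustrative size, and real deployments also need room for activations and caches.

```python
import math


def weights_gb(num_params, bytes_per_param):
    """Memory needed for the model weights alone, in gigabytes."""
    return num_params * bytes_per_param / 1e9


def gpus_needed(model_gb, gpu_memory_gb):
    """Minimum number of GPUs whose combined memory holds the weights."""
    return math.ceil(model_gb / gpu_memory_gb)


size = weights_gb(70e9, 2)     # hypothetical 70B model at 2 bytes/parameter
print(size)                    # 140.0
print(gpus_needed(size, 80))   # 2  (80GB per H100)
print(gpus_needed(size, 192))  # 1  (192GB per MI300X)
```

Fitting the model on one chip instead of two avoids splitting it across devices, which is where much of the inference appeal comes from.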

Google TPU v5: Google’s Tensor Processing Units are purpose-built for machine learning and have been used internally for years to train models like Gemini. TPUs are optimized for Google’s specific frameworks (JAX and TensorFlow) and are available through Google Cloud. They’re cost-competitive with H100s for suitable workloads but less flexible for general ML research.

AWS Trainium and Inferentia: Amazon built custom silicon specifically for training (Trainium) and inference (Inferentia) to reduce their dependence on NVIDIA and offer customers a cost-effective alternative. Inferentia2 offers competitive price-performance for production LLM inference workloads.

Groq: A startup that built a radically different architecture — the Language Processing Unit (LPU) — optimized purely for the inference phase of LLMs. Groq chips can run LLM inference dramatically faster than GPUs (they’ve demonstrated generating 500+ tokens per second for a 70B parameter model), but they lack the flexibility for training.

What’s Coming: Blackwell and Beyond

NVIDIA’s next-generation Blackwell architecture (the B100 and B200 chips) began shipping in 2024-2025. The B200 offers roughly 2-3x the performance of the H100 for transformer inference, with 192GB of HBM3e memory and a new NVLink Switch that connects up to 576 GPUs as a unified compute fabric.

The longer-term trajectory of AI hardware is moving toward specialization — chips designed for specific model architectures, specific precision levels, and specific deployment scenarios. The era of one GPU architecture dominating everything is likely to give way to a more diverse hardware landscape. But for now, if you’re building or training cutting-edge AI systems, you almost certainly need NVIDIA GPUs — and NVIDIA has no intention of giving that position up easily.